Production_Grade_AI_App_Delivery_with_ChatGPT_reissued (1)

Scene 1 (0s)

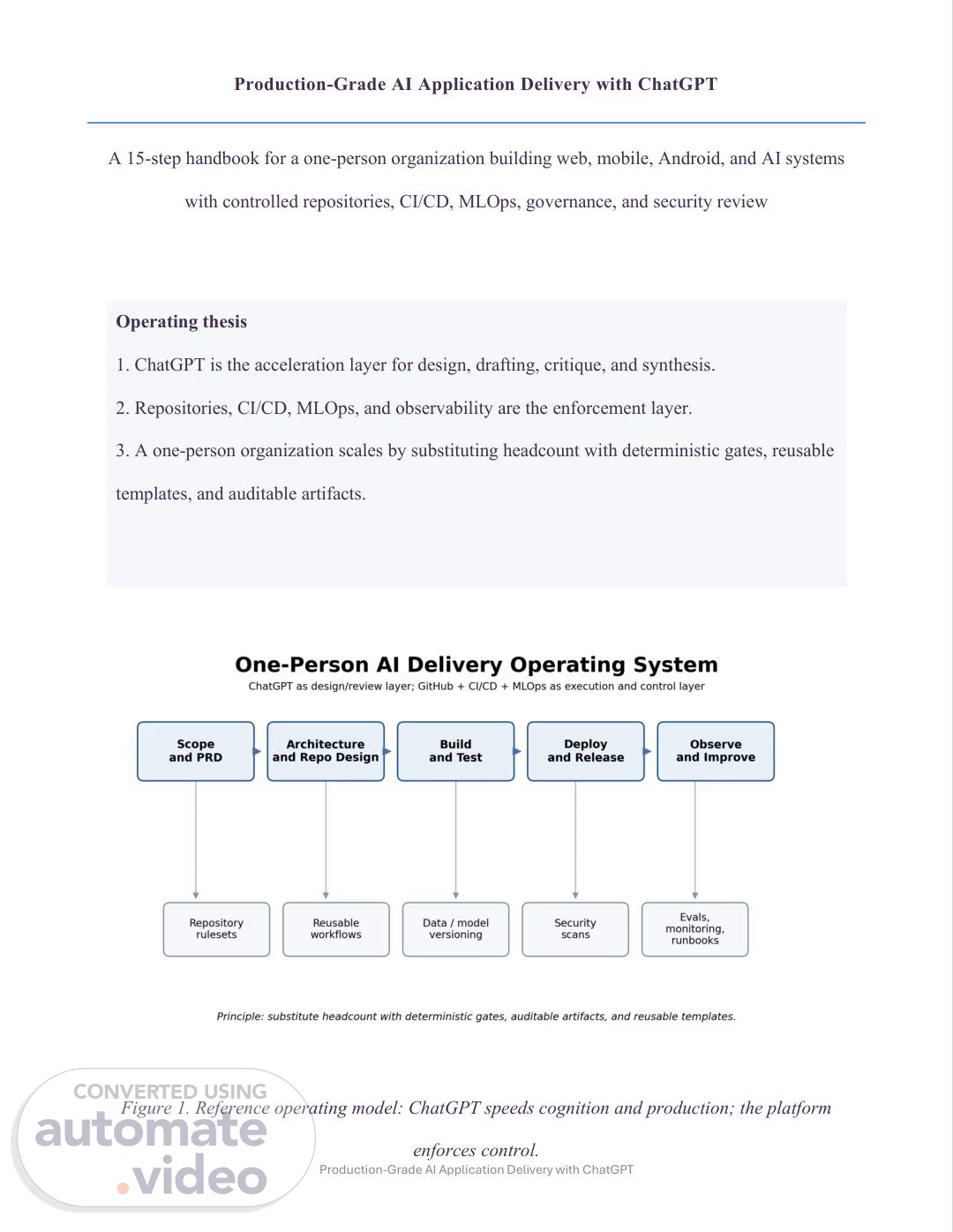

Production-Grade AI Application Delivery with ChatGPT Production-Grade AI Application Delivery with ChatGPT A 15-step handbook for a one-person organization building web, mobile, Android, and AI systems with controlled repositories, CI/CD, MLOps, governance, and security review Operating thesis 1. ChatGPT is the acceleration layer for design, drafting, critique, and synthesis. 2. Repositories, CI/CD, MLOps, and observability are the enforcement layer. 3. A one-person organization scales by substituting headcount with deterministic gates, reusable templates, and auditable artifacts. Figure 1. Reference operating model: ChatGPT speeds cognition and production; the platform enforces control..

Scene 2 (29s)

Production-Grade AI Application Delivery with ChatGPT.

Scene 3 (36s)

Production-Grade AI Application Delivery with ChatGPT Executive Summary A one-person organization can ship software at a professional level only if it replaces missing headcount with deterministic controls. The operating model in this handbook is built on a simple separation. ChatGPT is the design, synthesis, drafting, and review layer. Git repositories, CI/CD, infrastructure as code, security scanners, model registries, deployment gates, and observability systems remain the execution and control layer. That separation matters because language models accelerate thinking and production, yet they do not replace the need for immutable evidence, repeatable builds, reversible deployments, and auditable change records. The practical implication is that the solo builder should avoid imitating the ceremony of a large team. You do not need performative approval chains or elaborate meetings with yourself. You do need branch rules, reusable workflows, short-lived credentials, policy-as-code where it matters, versioned datasets and models, explicit rollback paths, and a small set of operating cadences that force discipline. The target state is not “move fast and hope.” The target state is “move quickly inside a narrow set of well-defined rails.” ChatGPT is useful across the entire SDLC when it is given bounded context and a narrow task. Use one project or workspace per product, repository, or major stream of work. Load the PRD, architecture decision records, threat model, data contracts, runbooks, and prior postmortems into that context so the model reasons against the same operating baseline on every iteration. Use it to draft requirements, challenge assumptions, generate scaffolding, critique pull requests, derive test cases, write migration plans, draft risk memos, and summarize incidents. Do not make ChatGPT the source of truth for deployment state, compliance status, or production evidence. Those belong in systems that timestamp, version, verify, and alert. This handbook uses the classic 15-step SDLC as the organizing spine. Each step is adapted for web, mobile, Android, iOS, backend, and AI feature delivery. Every section explains what the step is trying.

Scene 4 (1m 41s)

Production-Grade AI Application Delivery with ChatGPT to produce, how ChatGPT should be used, how to implement the step at production scale for a single operator, and what “done” looks like. The goal is to help one person build a controlled software factory, not just a pile of code. Assumptions and Design Constraints Assumptions for the reference design: 1. One primary operator owns product, engineering, operations, and model delivery. 2. The system may include a conventional web or mobile stack plus one or more AI functions such as retrieval, agents, ranking, classification, generation, or forecasting. 3. Budget discipline matters, so the default recommendation favors managed services, serverless or containerized deployment, and reusable automation over staffing-heavy controls. 4. GitHub is used as the control plane for repositories and CI/CD, DVC or equivalent for data and model versioning, MLflow for experiment tracking and model lifecycle, Terraform for infrastructure, and OpenTelemetry-compatible observability for traces, metrics, and logs. 5. Where a control cannot be made independent because there is only one person, the substitute is a machine-enforced gate, an evidence artifact, or a time-delayed promotion rule. Preflight: Reference Production Stack Reference production stack for a solo AI software factory The exact vendor mix can change, though the operating roles should stay stable. Keep one tool per role unless there is a compelling reason to split. A practical baseline is: GitHub for repo control and CI/CD, Terraform for infrastructure declarations, a managed cloud runtime for web/API services, a managed database, object storage for artifacts and datasets, DVC for data or model snapshots, MLflow for experiment and model lineage, a secret manager, OpenTelemetry-compatible telemetry export, and.

Scene 5 (2m 46s)

Production-Grade AI Application Delivery with ChatGPT ChatGPT for drafting, review, synthesis, and operational analysis. If you already use equivalent tools, preserve the roles even if the product names differ. The stack should be designed around five questions. First, where is the source of truth for change control? That is the repository and CI/CD history. Second, where is the source of truth for runtime state? That is the cloud control plane and observability stack. Third, where is the source of truth for model lineage? That is DVC plus MLflow or the equivalent. Fourth, where is the source of truth for policy and governance? That is the versioned docs, risk register, and security findings. Fifth, where is the source of truth for reasoning support? That is ChatGPT, bounded by the first four sources rather than replacing them. A one-person organization should optimize for reversibility. Choose services with strong APIs, clear audit logs, exportable data, and manageable cost curves. Prefer managed identity over custom auth unless the product itself is an identity product. Prefer managed queues and managed databases over self-hosted clusters. Prefer a single observability path over multiple partial dashboards. Prefer an application topology that can be explained in one page. Complexity should be earned by load, latency, or compliance, not by aspiration. Common anti-patterns to avoid: 1. Two deployment methods for the same service. 2. Prompt logic embedded in UI code or scattered across notebooks. 3. Data artifacts stored manually in cloud buckets with no linkage to commits. 4. Static cloud credentials in CI/CD. 5. Production changes that are not attached to a pull request, workflow run, and release manifest. 6. Alerting without runbooks. 7. Evals that are run only before demos. 8. Architecture choices copied from large companies with very different staffing assumptions..

Scene 6 (3m 51s)

Production-Grade AI Application Delivery with ChatGPT The practical rule is that every major system surface needs one controlling artifact. Repositories need rulesets and templates. Infrastructure needs Terraform state and plans. Data and models need versioning and lineage. Releases need manifests and provenance. Governance needs a risk register and evidence packet. ChatGPT should help you author and critique those artifacts quickly. It should not be the only place they exist. Minimal control-oriented stack Role Recommended default Why it scales for one person Repo control GitHub repository + rulesets + PR templates Single control plane for code, reviews, workflows, and release evidence. CI/CD GitHub Actions reusable workflows Low-ops automation with strong linkage to commits, artifacts, and environments. Infrastructure Terraform Declarative change control and repeatable environment creation. Runtime Managed container or serverless platform Reduces undifferentiated ops and shortens recovery time. Data/model lineage DVC + object storage Binds large data and model artifacts to Git history. Experiment and registry MLflow Tracks runs, metrics, artifacts, lineage, and promotion state. Secrets and Cloud secret manager + Avoids long-lived CI/CD secrets and.

Scene 7 (4m 37s)

Production-Grade AI Application Delivery with ChatGPT identity OIDC federation centralizes credential hygiene. Telemetry OpenTelemetry-compatible stack Unifies traces, metrics, and logs across app and model surfaces. Figure 2. Illustrative control coverage matrix. Use it to think about where release gates and security checks concentrate. Handbook Structure 1. Problem Definition and Opportunity Framing 2. Requirements Engineering 3. System Architecture Design 4. UX/UI Design 5. AI/ML System Development.

Scene 8 (4m 59s)

Production-Grade AI Application Delivery with ChatGPT 6. Software Development 7. Security Engineering and Security Review 8. Testing and Quality Assurance 9. DevOps, CI/CD, and Controlled Release Engineering 10. Deployment and Runtime Configuration 11. Monitoring, Observability, and Incident Response 12. Maintenance, Reliability Engineering, and Continuous Improvement 13. Governance, Compliance, and Ethical Control 14. Documentation and Knowledge Management 15. Product Lifecycle Management and Scaling Roadmap 1. Problem Definition and Opportunity Framing Objective. Convert a vague idea into a bounded problem statement, business case, and success metric set. With ChatGPT. Ask ChatGPT to generate a decision memo with the following fixed fields: user segment, job-to-be-done, problem severity, measurable baseline, counterfactual alternatives, expected economic upside, downside risk, and kill criteria. Then ask it to produce an adversarial review of that memo. The fastest way to waste a solo operator’s time is to start building before the problem statement can survive critique. Production-grade implementation. Maintain a product thesis document in the repository under /docs/product/thesis.md. Treat it as versioned scope. Each change to the thesis should go through a pull request like code. Use a standard problem-framing template: problem, affected users, workflow today, pain score, measurable target, constraints, regulatory concerns, failure modes, and non-goals. Keep a.

Scene 9 (5m 56s)

Production-Grade AI Application Delivery with ChatGPT short decision log so that later architecture and governance choices can be traced to the original business need. For AI features, define the atomic decision you want the model to support. Examples: classify an inbound ticket, rank retrieval results, draft a response, detect fraud signals, summarize a patient note, or forecast demand. The clearer the atomic decision, the easier the eval design later. Scaling pattern for one person. Create a single “intake” workflow in ChatGPT. Feed it rough notes, screenshots, tickets, voice transcripts, or market observations. Ask it to normalize those into a one- page problem brief. Once the brief exists, store it in the repository and stop debating in chat. From that point forward, the repository document is canonical and ChatGPT becomes an editor and reviewer, not the living source of scope. This simple separation prevents drift between what the model suggested in one conversation and what the system is actually being built to do. Instruction points: • Define one primary user and one primary workflow before defining features. • Specify the top three measurable outcomes and their units. • Define what failure means in business terms, not only model terms. • Write down non-goals early to prevent uncontrolled scope expansion. • For AI use cases, state where human override is required. Done when: • A versioned thesis and success-metric document exists. • Baseline metrics and kill criteria are explicit. • Key risks, assumptions, and non-goals are documented. • ChatGPT can restate the problem in one paragraph without ambiguity because the source material is clean..

Scene 10 (7m 0s)

Production-Grade AI Application Delivery with ChatGPT Figure 1. Reference operating model: ChatGPT speeds cognition and production; the platform enforces control. 2. Requirements Engineering Objective. Translate the problem into functional, non-functional, regulatory, and AI-specific requirements that can be tested. With ChatGPT. Use ChatGPT as a structured requirements analyst. Provide the thesis, target users, operating constraints, platform targets, and any standards you must satisfy. Ask it to create user stories, edge cases, API contracts, mobile-specific requirements, data retention rules, and a requirement traceability matrix. Then ask it to produce an “ambiguity audit” that flags terms such as fast, secure, reliable, accurate, intuitive, production-ready, and scalable unless they are quantified. Production-grade implementation. Store requirements as text in /docs/requirements/ with separate files for functional requirements, non-functional requirements, AI requirements, and external interfaces. Give every requirement a stable identifier. That allows test cases, security controls, and deployment gates to point to something concrete. For web and mobile systems, the minimum non-.

Scene 11 (7m 46s)

Production-Grade AI Application Delivery with ChatGPT functional set should cover latency, availability, authentication, authorization, auditability, privacy, localization if relevant, accessibility, backup and restore expectations, and observability. For AI functions, add requirements for data provenance, evaluation thresholds, fallback behavior, prompt or policy versioning, model update cadence, prompt injection resistance where relevant, and escalation paths when confidence is low. Scaling pattern for one person. Use ChatGPT to derive acceptance criteria from each requirement and save those criteria next to the requirement. That creates testability early and reduces rework. Do not keep requirements trapped in a note-taking app without version control. A one-person organization becomes fragile when core decisions exist only in memory or chat history. Versioning requirements in the repository makes later architecture, testing, and incident review much easier. Instruction points: • Assign a unique identifier to every requirement. • For every requirement, define the triggering event, expected behavior, and measurable acceptance condition. • Separate product desirables from hard operational constraints. • Add explicit degraded-mode requirements for AI or third-party outages. • Record which requirements are regulatory, contractual, or self-imposed policy. Done when: • Requirements are versioned, testable, and uniquely identified. • AI-specific controls are defined alongside standard product requirements. • Every major requirement has acceptance criteria and an owner, even if the owner is the same person..

Scene 12 (8m 43s)

Production-Grade AI Application Delivery with ChatGPT 3. System Architecture Design Objective. Produce a system architecture that is simple enough for one person to run and strong enough to enforce change control, scale, and recovery. With ChatGPT. Give ChatGPT the requirements and ask for three architecture candidates: lowest-cost, lowest-ops, and highest-control. Ask it to compare failure domains, operational burden, data movement, secrets exposure, vendor lock-in, and rollback complexity. Then ask for a concise architecture decision record for the chosen option. Production-grade implementation. Favor architectures that minimize undifferentiated operations. For most one-person organizations, that means managed databases, managed identity, object storage, managed observability, serverless jobs where possible, and containerized stateless services when custom runtime control is needed. Use Terraform to define infrastructure as code and keep environment drift visible. For AI systems, split the stack into at least four logical planes: application plane, model or agent plane, data plane, and control plane. The control plane includes CI/CD, secrets, identity federation, policy files, evaluation jobs, and deployment metadata. That plane must be explicit because it is what makes a solo operation governable. Controlled repositories start here, not later. Decide whether you need a monorepo or a small set of service repositories. A monorepo is usually the default for a one-person operator because it reduces coordination overhead, centralizes workflows, and makes cross-cutting changes easier. Use a clear top- level layout such as: /apps/web, /apps/mobile, /services/api, /ml, /infra, /docs, /ops. Reserve /docs/adr for architecture decision records and /ops for runbooks, checklists, and release procedures. Use repo rulesets or branch protection so main is immutable except through pull requests with required status checks. GitHub rulesets, branch protections, CODEOWNERS, deployment environments, and OIDC- based cloud authentication are the core repository control primitives in this handbook [R6][R7][R8][R9]..

Scene 13 (9m 48s)

Production-Grade AI Application Delivery with ChatGPT Scaling pattern for one person. Machine-enforced review matters more than symbolic review. In a single-operator setting, a second human approver may not exist, so quality must come from required checks, deterministic templates, signed artifacts, environment protection, and release evidence. Use ChatGPT to generate the first draft of architecture diagrams, ADRs, API boundaries, and failure-mode analyses. Commit the finalized artifacts to the repository. The design rule is simple: no important boundary should exist only in your head. Instruction points: • Prefer one repository unless there is a real need to isolate release cadence or access. • Separate runtime data paths from control paths. • Define rollback before the first deployment. • Keep infrastructure declarative and versioned. • Write one ADR for each irreversible decision: repo structure, database choice, model serving path, identity approach, deployment topology. Done when: • Architecture diagrams, ADRs, repo layout, and environment topology are versioned. • Mainline protection and deployment environment controls are enabled. • Cloud authentication uses short-lived credentials via OIDC instead of long-lived static secrets where supported [R9]. 4. UX/UI Design Objective. Convert requirements into user flows, screens, interaction rules, and failure messaging that support real work rather than cosmetic demos. With ChatGPT. Use ChatGPT to transform the requirements into task-oriented user journeys, screen inventories, copy drafts, error-state microcopy, accessibility considerations, and edge-case scenarios..

Scene 14 (10m 47s)

Production-Grade AI Application Delivery with ChatGPT For AI features, ask for trust-preserving interaction patterns: confidence cues, source visibility, retry options, escalation to manual flow, and explicit user consent where generation or automation could materially affect outcomes. Production-grade implementation. Maintain design artifacts in versioned files. Even if you design in a GUI tool, keep exported screen specs, interaction notes, and design tokens synchronized in the repository. For mobile and Android specifically, define offline behavior, sync conflict behavior, device permission rationale, push notification strategy, and battery/network constraints. For web, define responsive breakpoints, keyboard navigation, semantic roles, and input validation rules. For AI interactions, specify the contract between user intent, model response, and system safeguards. If the model can produce a wrong answer, the interface must make correction and recovery cheaper than blind acceptance. Scaling pattern for one person. Ask ChatGPT to generate “negative journey” walkthroughs: What does the user see if the model times out? If the retrieval set is empty? If a document fails policy checks? If the app cannot reach the backend? Solo builders often over-optimize the happy path. Production systems fail on the unhappy path. Instruction points: • Design error states and degraded mode before polishing the happy path. • Specify accessibility and responsiveness as requirements, not later cleanup. • Define AI-specific affordances: explainability, citation or source display, confidence handling, and user override. • Version design decisions alongside code. Done when: • Key journeys, negative flows, and accessibility expectations are specified. • AI output handling is explicit at the interface level..

Scene 15 (11m 53s)

Production-Grade AI Application Delivery with ChatGPT • Design artifacts are versioned and linked to implementation work. 5. AI/ML System Development Objective. Build reproducible data, training, prompt, evaluation, and promotion workflows so that model behavior can be improved without losing lineage. With ChatGPT. Use ChatGPT to draft dataset schemas, labeling guidelines, prompt libraries, feature definitions, experiment plans, and evaluation rubrics. Ask it to enumerate failure modes for each model class you use. For LLM applications, use ChatGPT to synthesize adversarial prompts, policy-violating cases, grounding failures, and structured output edge cases. Then turn those into eval datasets and regression suites. Production-grade implementation. Treat data, prompts, and models as versioned artifacts. DVC is useful because it ties large data and model artifacts to Git history while storing the heavy blobs in remote storage, and it can define reproducible pipeline DAGs [R12]. MLflow is useful because it tracks parameters, code versions, metrics, artifacts, and later model lineage in the registry [R13][R14]. For GenAI systems, keep prompt templates, tool schemas, retrieval settings, chunking policy, and guardrail rules under version control. Use MLflow or an equivalent evaluation system to compare runs and monitor GenAI quality over time [R15]. If you use OpenAI models, pinned model versions, reusable prompts, eval loops, and safety checks should be part of the pipeline, not ad hoc chat habits [R3][R4][R5]. The minimal MLOps loop for one person is: snapshot data, run a versioned training or prompt experiment, capture metrics and artifacts, evaluate against a fixed suite plus a growing error set, register a candidate model or prompt package, deploy only to staging, run online validation or shadow checks, then promote or reject. Never promote a model just because it “looked good” in one manual session. The combination of DVC for data lineage and MLflow for experiment and registry lineage.

Scene 16 (12m 58s)

Production-Grade AI Application Delivery with ChatGPT gives a solo operator enough structure to investigate drift, regressions, and bad releases without platform bloat [R12][R13][R14]. Scaling pattern for one person. Keep the model stack boring. Use pre-trained or API-served models unless you have a strong reason to own training or fine-tuning. The scarce resource in a one-person organization is not raw model access. It is verification bandwidth. Every extra model you own multiplies validation and incident load. ChatGPT should help you reduce that load by generating eval cases, reviewing label policy, and summarizing model deltas from one release to the next. Instruction points: • Version datasets, prompts, and model configs with stable identifiers. • Track every experiment with code version, data version, parameters, metrics, and artifacts. • Separate offline metrics from release criteria; use both. • Maintain a standing regression suite built from real failures. • Reject untracked manual “hero runs.” Done when: • Data lineage, experiment lineage, and registry lineage are recoverable. • Promotion requires documented eval thresholds and release evidence. • The system can answer: which data, prompt, code, and model version produced this output?.

Scene 17 (13m 45s)

Production-Grade AI Application Delivery with ChatGPT Figure 3. Unified CI/CD and MLOps pipeline. Promote the same artifact that was staged and evaluated. 6. Software Development Objective. Turn requirements and architecture into maintainable application code, API code, mobile code, infrastructure code, and operational code. With ChatGPT. Use ChatGPT as a paired implementation and review agent, not as an unquestioned code generator. Ask it for scaffolds, migrations, tests, refactors, typed interfaces, API clients, Android or iOS integration stubs, and pull request checklists. Then ask it to critique its own output against your repo conventions and security requirements. Prompt it with the existing code structure and style guide so it writes into the system you have, not the one it imagines. Production-grade implementation. A controlled repository is the center of gravity. Use a standard branch model: short-lived feature branches, protected main, signed or verified commits if practical, pull requests for all changes, semantic tagging for releases, and release branches only when you truly need parallel stabilization. Enforce pull request templates that require: scope, linked requirement IDs, test evidence, rollout plan, rollback plan, data migration notes, and security impact. Use CODEOWNERS to map critical paths to explicit responsibility, even if the only owner is you, because.

Scene 18 (14m 40s)

Production-Grade AI Application Delivery with ChatGPT it clarifies what areas are sensitive [R7]. Rulesets should require status checks, prevent force-push on protected branches, and protect release tags [R6]. For web and backend code, separate business logic from framework glue and from AI orchestration logic. For mobile and Android, separate platform integration, data sync, domain logic, and UI state. For AI-integrated systems, keep prompt-building and inference orchestration in modules that can be tested independently from the transport layer. Store prompts as files, not scattered string literals. Add linters, type checks, formatting, dead-code checks, and unit tests as mandatory status checks. Scaling pattern for one person. A solo builder should use templates aggressively: repository template, service template, API template, batch job template, Android module template, runbook template, postmortem template, eval template. ChatGPT can generate and refine these templates fast. Once they stabilize, stop re-inventing structure. Platform consistency is leverage. Controlled sameness is one of the few ways a single operator can scale. Instruction points: • Every change enters through a pull request, even when you are the only author. • Require lint, type, unit, and policy checks before merge. • Keep prompts, migrations, and infrastructure definitions versioned beside code. • Use templates to standardize service shape and documentation. • Ask ChatGPT to review diffs against project-specific rules before merge. Done when: • Repository rules and templates enforce basic engineering discipline. • Code structure mirrors architecture boundaries. • Operational metadata travels with the change request..

Scene 19 (15m 43s)

Production-Grade AI Application Delivery with ChatGPT 7. Security Engineering and Security Review Objective. Build a secure-by-default delivery path that catches common software, cloud, and AI security failures before release. With ChatGPT. Use ChatGPT to draft threat models, abuse cases, data-flow reviews, attack trees, secure design checklists, and remediation playbooks. Ask it to reason separately about application security, cloud identity, secrets handling, dependency risk, supply-chain risk, mobile device risk, and AI-specific risks such as prompt injection, unsafe tool use, data exfiltration through context windows, retrieval poisoning, jailbreak attempts, and excessive autonomy. Production-grade implementation. Use NIST SSDF as the software security baseline [R17]. For AI systems, map that baseline to NIST AI RMF and the Generative AI Profile so that reliability, misuse, bias, and human oversight are treated as first-class risks rather than afterthoughts [R18][R19]. On the repository side, enable code scanning, secret scanning, dependency review, and automated dependency updates where available [R11]. Dependency review is valuable because it can block risky new dependencies at pull request time; secret scanning helps catch leaked credentials across history; code scanning surfaces language-specific vulnerabilities and insecure patterns [R11]. Use GitHub Actions hardening guidance to pin third-party actions, minimize permissions, and isolate secrets [R10][R11]. Use OIDC for cloud auth to avoid long-lived credentials in CI/CD [R9]. Supply-chain integrity matters even for a one-person operator. Artifact attestations and SLSA-style provenance provide evidence of where and how artifacts were built [R10][R20]. The goal is not compliance theater. The goal is to make it possible to answer, after the fact, which workflow built a given artifact from which commit using which runner identity. For AI security, use OWASP ASVS for application controls and the OWASP LLM guidance for large-language-model specific risks [R21][R22]. Treat model-facing input as hostile. Sanitize tool arguments. Restrict outbound network.

Scene 20 (16m 48s)

Production-Grade AI Application Delivery with ChatGPT access where possible. Force structured outputs where possible. Keep high-impact operations behind explicit allowlists and human confirmation. Scaling pattern for one person. Replace impossible manual review with a security gate bundle. A merge is blocked unless repository checks pass. A release is blocked unless staging deploy plus security suite passes. A production promotion is blocked unless artifact identity, migration state, and rollback plan are present. ChatGPT can draft the checklists and threat model quickly. The enforcement must be in the platform. Instruction points: • Threat-model every external input and every high-impact action. • Use short-lived credentials and least privilege in CI/CD. • Enable code scanning, secret scanning, dependency review, and automated updates. • Treat prompts, tools, and retrieval sources as attack surfaces. • Record security exceptions with expiry dates. Done when: • Security controls run automatically in the delivery path. • Identity, provenance, and rollback evidence exist for releases. • AI-specific misuse and prompt/tool risks are explicitly mitigated. 8. Testing and Quality Assurance Objective. Make quality measurable, repeatable, and release-blocking across application code, infrastructure, and model behavior. With ChatGPT. Use ChatGPT to derive test cases from requirements, generate property-based cases, enumerate edge conditions, build mobile scenario matrices, and create failure-injection ideas. For LLM.

Scene 21 (17m 44s)

Production-Grade AI Application Delivery with ChatGPT features, use it to generate eval examples, hard negatives, structured output corruption cases, hallucination traps, tool misuse prompts, and multilingual or domain-shift cases. Production-grade implementation. Structure the quality stack in layers: unit tests, contract tests, integration tests, end-to-end tests, migration tests, performance tests, resilience tests, and AI evals. A one-person organization does not need dozens of test categories in name only; it needs a small set of tests that really gate releases. Contract tests are especially important because they protect the seams between web/mobile clients, APIs, background jobs, and model services. For AI systems, maintain at least four quality sets: gold set, adversarial set, regression set, and smoke set. The regression set should be fed continuously from real failures. OpenAI’s evaluation guidance emphasizes running and comparing evals continuously as changes are made [R3]. Adopt that idea even if your eval harness is modest. Scaling pattern for one person. Build a release scorecard. Each pull request or candidate release should produce a short machine-readable summary: requirement IDs touched, tests passed, performance delta, security findings, AI eval delta, migration impact, and rollback readiness. ChatGPT can summarize that scorecard into a human-readable release note, but the underlying scorecard should be produced automatically in CI. Instruction points: • Map every critical requirement to at least one test or eval. • Treat flaky tests as defects in the delivery system. • Separate fast gating tests from slower nightly or pre-release suites. • Keep a failure-derived regression set for AI behavior. • Block release on test evidence, not intuition. Done when: • The system produces a reproducible release scorecard..

Scene 22 (18m 49s)

Production-Grade AI Application Delivery with ChatGPT • Critical seams are protected by contract and integration tests. • AI behavior changes are measured against a standing eval set. 9. DevOps, CI/CD, and Controlled Release Engineering Objective. Build a deterministic path from commit to production with reusable workflows, controlled environments, and evidence artifacts. With ChatGPT. Use ChatGPT to draft workflow YAML, environment promotion logic, artifact naming standards, release checklist automation, changelog templates, and failure triage guides. Ask it to simplify the pipeline rather than make it more ornamental. The best solo-operator pipeline is the one you can still understand during an incident at 03:00. Production-grade implementation. The repository should contain a small set of reusable workflows: validate, build, test, security, package, deploy-staging, promote-production, and rollback. Use GitHub Actions environments to separate staging and production and to apply deployment protection rules where available [R8]. Give each workflow least-privilege permissions. Prefer OIDC-based cloud login to stored secrets [R9]. Use artifacts to persist build outputs and test evidence, then attach attestations or equivalent provenance evidence where supported [R10]. Store changelogs, migration plans, and deployment metadata as artifacts of the release, not as vague memory. The release path should be staged. A typical flow is: merge to main, run validation and packaging, deploy to staging automatically, run smoke tests plus AI evals plus migration checks, require an explicit promote step to production, then monitor for a fixed soak window. For one person, “promotion” can be your deliberate action in a protected environment, but the critical point is that the promoted artifact is exactly the staged artifact. Never rebuild between staging and production. That breaks provenance and makes rollback analysis harder. For mobile, use separate tracks or channels such as internal, beta, and production. For backend or web, use blue-green, canary, or percentage.

Scene 23 (19m 54s)

Production-Grade AI Application Delivery with ChatGPT rollout only when you can observe and roll back quickly; otherwise a simple staged full rollout with strong monitoring is often safer. Controlled repositories are the front gate. Use rulesets for branch and tag control, require status checks, prohibit direct pushes to protected branches, and keep release tags immutable [R6]. The repository becomes a control surface, not just a file store. Every deployment should trace back to a commit, a workflow run, an artifact digest, a set of checks, and a release note. This is what lets a single operator move quickly without losing accountability. Scaling pattern for one person. Start with one golden path. Do not create different CI/CD logic for every service unless scale demands it. Reusable workflows, shared action versions, common environment variables, common naming schemes, and standard promotion rules reduce cognitive load. ChatGPT can help draft the first version of that golden path. Then lock it down and evolve it through versioned changes. Instruction points: • Use reusable workflows and a single golden path. • Stage before production and promote the exact same artifact. • Persist build, test, and release evidence. • Authenticate CI/CD to cloud using federation, not static secrets. • Keep rollback one command or one workflow away. Done when: • Commit-to-release flow is deterministic and reproducible. • Staging and production are protected, distinct environments. • Release artifacts have traceable provenance and attached evidence..

Scene 24 (20m 52s)

Production-Grade AI Application Delivery with ChatGPT Figure 4. Controlled repository stack. Use platform controls to replace missing organizational layers. 10. Deployment and Runtime Configuration Objective. Release software and AI capability safely, with explicit config control, migration discipline, and rollback. With ChatGPT. Use ChatGPT to draft deployment runbooks, migration plans, config matrices, and rollback decision trees. Ask it to enumerate “what breaks if this config changes” before you change anything in production. Production-grade implementation. Separate application code from runtime configuration. Manage infrastructure with Terraform and runtime secrets in a dedicated secret manager. Keep environment variables minimal, typed, and documented. Database migrations should be versioned and tested in.

Scene 25 (21m 23s)

Production-Grade AI Application Delivery with ChatGPT staging with realistic data shapes. For AI systems, separate model or prompt package rollout from application rollout where possible so that you can change one without destabilizing the other. If prompt or retrieval configuration changes are operationally meaningful, treat them as releasable artifacts with version numbers and rollback instructions. Scaling pattern for one person. Use release manifests. Each production release should declare application version, artifact digest, database migration version, model or prompt version, feature flags changed, environment variables changed, and rollback target. ChatGPT can draft human-readable summaries from the manifest, though the manifest itself should be machine-readable and stored with the release evidence. Instruction points: • Separate code, config, and secret changes. • Test migrations before production and make rollbacks explicit. • Treat prompt and model changes as releasable units. • Use feature flags carefully and clean them up aggressively. Done when: • Runtime state is documented and reproducible. • Rollback targets are known before release. • Config drift is minimized through declarative control. 11. Monitoring, Observability, and Incident Response Objective. Make the running system inspectable enough that one person can detect, diagnose, and recover from failures quickly. With ChatGPT. Use ChatGPT to design alerts, dashboards, incident templates, and postmortem structures. Feed it traces, logs, or error summaries and ask it for probable root causes, affected.

Scene 26 (22m 21s)

Production-Grade AI Application Delivery with ChatGPT components, and next diagnostic steps. It is useful as an incident analyst, not as the incident commander of record. Production-grade implementation. Use OpenTelemetry-compatible instrumentation for traces, metrics, and logs so that application, infrastructure, and model-serving signals can be correlated [R16]. At minimum, instrument request latency, error rate, throughput, saturation, queue depth, external dependency failures, and deployment events. For AI systems, add model or prompt version tags, token usage where relevant, retrieval hit quality, fallback counts, moderation or guardrail events, and eval drift indicators. Separate service-level indicators from business-level indicators. The first tells you whether the system is alive. The second tells you whether the system is useful. Incident response for a one-person organization must be procedural. Keep runbooks for the top ten failure modes: auth outage, database saturation, bad deployment, secret leak, prompt regression, data pipeline break, model drift, cost spike, mobile release defect, and third-party API degradation. Define alert thresholds that page you only for meaningful risk. Over-alerting destroys solo operations because there is no second responder to absorb noise. Keep a deployment marker on dashboards so you can correlate incidents with changes. Scaling pattern for one person. Run a weekly review of deployment frequency, lead time, change failure rate, mean time to detect, mean time to recover, top error sources, top support issues, and top AI failure classes. ChatGPT can cluster incidents and draft problem-management tickets from those findings. This converts observability from passive data exhaust into an improvement engine. Instruction points: • Instrument traces, metrics, and logs with consistent service and version metadata. • Add AI-specific telemetry for model, prompt, retrieval, and fallback behavior. • Alert on symptoms that require action, not every abnormality. • Keep concise runbooks for known failure modes..

Scene 27 (23m 26s)

Production-Grade AI Application Delivery with ChatGPT • Review operational metrics weekly. Done when: • A bad release is detectable within minutes, not days. • Telemetry can be filtered by service version and model or prompt version. • Incident handling and postmortem generation are standardized. Figure 7. Illustrative operating metrics. Scale should increase release throughput while reducing lead time and failure rate. 12. Maintenance, Reliability Engineering, and Continuous Improvement Objective. Prevent the system from decaying into an unmaintainable artifact through routine upgrades, debt reduction, and reliability work. With ChatGPT. Use ChatGPT to generate maintenance plans, dependency upgrade notes, deprecation impact reviews, and refactor proposals. Ask it to categorize technical debt by risk: security debt, reliability debt, velocity debt, and architecture debt..

Scene 28 (24m 0s)

Production-Grade AI Application Delivery with ChatGPT Production-grade implementation. Schedule maintenance as first-class work. Dependabot or an equivalent updater should keep dependencies moving [R11]. Code scanning and dependency review should make dependency risk visible before it becomes a crisis [R11]. Maintain a debt register in the repository with owner, risk level, remediation path, and target date. Reliability engineering for a one- person organization means eliminating repeat toil. Every repeated manual fix is a candidate for automation, a guardrail, or a runbook improvement. Scaling pattern for one person. Keep a monthly “platform day” or equivalent cycle. Use it to rotate secrets where needed, review access, prune stale flags, retire dead code paths, archive obsolete datasets, refresh eval sets, and update runbooks. ChatGPT is effective at summarizing what changed across the estate and drafting maintenance tickets, which reduces the mental load of remembering everything yourself. Instruction points: • Treat reliability debt as scheduled work, not spare-time work. • Keep a debt register with severity and deadline. • Automate repeated maintenance and cleanup tasks. • Refresh evals and regression cases as product scope evolves. Done when: • Upgrades, debt, and maintenance are visible and time-boxed. • Repeated manual recovery patterns shrink over time. • Operational entropy is actively reduced. 13. Governance, Compliance, and Ethical Control Objective. Create a lightweight but defensible governance model that can survive scrutiny from customers, auditors, or regulators..

Scene 29 (24m 57s)

Production-Grade AI Application Delivery with ChatGPT With ChatGPT. Use ChatGPT to draft policy documents, risk register entries, model cards, decision logs, retention schedules, data processing notes, and review checklists. Ask it to map your system to NIST AI RMF functions or to internal policy categories. Ask it to identify evidence gaps: claims you are making without an artifact that proves them. Production-grade implementation. Governance is often misunderstood as bureaucracy. For a one- person organization it should be small, sharp, and artifact-driven. Maintain the following minimum set: system inventory, data inventory, access matrix, dependency inventory, release log, risk register, security exceptions log, incident register, postmortem archive, model or prompt change log, and retention/deletion policy. NIST AI RMF provides a practical structure for governing trustworthy AI through govern, map, measure, and manage functions [R18]. The Generative AI Profile extends that to GenAI-specific risks [R19]. Use those documents as a framing device, not as a ritual. Translate them into repo files, release gates, and operating reviews. Ethical control for AI systems means defining where automation is allowed, where explanation is required, where human override is mandatory, and what harms are unacceptable. Bias, privacy leakage, hallucination, unsafe advice, over-automation, and opaque ranking decisions are all governance topics. Put them in the risk register with concrete controls and review dates. Use model cards or prompt package cards to record intended use, excluded use, training or data assumptions, evaluation limits, and open risks. Scaling pattern for one person. Establish a weekly governance cadence: review open risks, recent releases, incidents, access changes, policy exceptions, and any AI behavior regressions. The output is a short evidence packet. ChatGPT can draft the packet, but the authoritative inputs must come from the repository, CI/CD, scanners, and observability stack. Governance is credible only when it is attached to evidence..

Scene 30 (26m 2s)

Production-Grade AI Application Delivery with ChatGPT Instruction points: • Keep governance artifact-driven and small. • Map AI risks to controls and review dates. • Record exceptions with explicit expiry. • Maintain model or prompt cards for significant AI releases. • Review access and risk registers on a fixed cadence. Done when: • Governance claims can be backed by versioned evidence. • AI risks are documented with controls and review dates. • Governance review becomes a repeatable weekly loop, not an annual panic..

Scene 31 (26m 24s)

Production-Grade AI Application Delivery with ChatGPT Figure 5. Weekly governance loop for a one-person organization. 14. Documentation and Knowledge Management Objective. Convert personal memory into durable system knowledge so the organization can scale beyond the founder’s working memory. With ChatGPT. Use ChatGPT to draft architecture docs, API docs, onboarding notes, runbooks, ADRs, test plans, and incident summaries. Ask it to compress long technical conversations into canonical docs with decisions, rationale, open questions, and next steps. Use Projects and connected tools or apps where appropriate to keep relevant materials discoverable within ChatGPT [R1][R2]. Production-grade implementation. Keep documentation in the repository or in a tightly connected docs system with version history. The minimum documentation set is: README, architecture overview, ADRs, environment guide, local setup, CI/CD overview, release process, runbooks, incident process, security baseline, data contracts, API contracts, model or prompt change log, and customer- facing limitations where relevant. Use docs-as-code conventions so changes to docs can be reviewed and linked to code changes. If a runbook is not versioned, it will drift at the exact moment you need it. Scaling pattern for one person. Adopt a documentation rule: every material change yields one permanent artifact. A feature creates or updates requirements and release notes. A platform change creates or updates an ADR. An incident creates a postmortem and runbook fix. A security issue creates a risk entry and control update. ChatGPT makes these artifacts cheaper to produce. The discipline is to actually commit them. Instruction points: • Prefer canonical docs over repeated chat explanations. • Version docs with code where operational coupling is tight. • Update runbooks after every incident or near miss..

Scene 32 (27m 29s)

Production-Grade AI Application Delivery with ChatGPT • Keep documentation discoverable and scoped by domain. Done when: • Core operating knowledge survives outside your memory. • Docs are linked to code, releases, incidents, and architecture decisions. • ChatGPT can operate as a useful reviewer because the knowledge base is explicit. 15. Product Lifecycle Management and Scaling Roadmap Objective. Manage the product as a living system from launch through iteration, expansion, and retirement. With ChatGPT. Use ChatGPT to synthesize customer feedback, cluster bug reports, propose roadmap options, estimate operational implications of new features, and draft deprecation plans. Ask it to rank roadmap items by user value, risk reduction, and maintenance burden rather than by novelty. Production-grade implementation. Run the product lifecycle on a small number of fixed loops: weekly triage, weekly release review, monthly platform review, monthly roadmap adjustment, quarterly architecture review, quarterly security and governance review. Maintain a product ledger that tracks features shipped, features retired, unresolved user pain, support burden, top reliability risks, and AI-specific error classes. Product scaling for a one-person organization means saying no more often, not adding parallel initiatives. Each new surface area adds maintenance, governance, and incident load. Design the roadmap in stages. Stage 1, establish the golden path: one repository, one deployment model, one observability stack, one release template, one AI eval harness, one risk register. Stage 2, harden and automate: better tests, more telemetry, more rigorous release evidence, DVC/MLflow lineage, fewer manual steps. Stage 3, specialize only where justified: split repositories or services, add more deployment patterns, introduce stronger policy controls, or add dedicated model serving when throughput, latency, or compliance demands it. The maturity roadmap in this handbook is illustrative,.

Scene 33 (28m 35s)

Production-Grade AI Application Delivery with ChatGPT though the direction is deliberate: governance, CI/CD, security, and observability should mature before architectural complexity. Scaling pattern for one person. Measure scale in control-adjusted throughput, not raw feature count. A healthy one-person product operation should increase release frequency while reducing change failure rate and lead time, as automation and guardrails improve. The point is not to mimic a large engineering org. The point is to reach a stable state in which the system can absorb growth without continuous heroics. Instruction points: • Keep a fixed operating cadence for product, platform, and governance reviews. • Prioritize work that improves control-adjusted throughput. • Retire features that cost more than they return. • Add complexity only after the current operating model is stable. Done when: • The product roadmap is constrained by measurable outcomes and operational load. • Maturity increases through automation and evidence, not ceremony. • The system can grow without multiplying chaos..

Scene 34 (29m 15s)

Production-Grade AI Application Delivery with ChatGPT Figure 6. Illustrative 30-180 day maturity roadmap. Governance, CI/CD, security, and observability should mature before architecture complexity. Appendix A. 30-60-90 Day Bootstrap Plan 30-60-90 day bootstrap plan for a one-person organization Days 1-10: 9. Create the repository template and top-level structure. 10. Enable protected main, rulesets, PR templates, issue templates, and release template. 11. Set up local dev container or reproducible local environment. 12. Implement lint, type, unit test, and basic build workflows. 13. Choose deployment target and configure infrastructure as code. 14. Stand up staging environment and deployment workflow. 15. Create the baseline docs set: thesis, requirements, architecture, ADRs, runbook skeletons..

Scene 35 (29m 51s)

Production-Grade AI Application Delivery with ChatGPT Days 11-30: 16. Add secret scanning, dependency review, code scanning, dependency update automation, and environment protections. 17. Wire OIDC cloud authentication. 18. Add OpenTelemetry instrumentation and a minimal dashboard pack. 19. Create release manifest schema and rollback workflow. 20. Establish data/model versioning and MLflow tracking if AI features are in scope. 21. Build the first eval harness and release scorecard. 22. Create the governance packet templates: risk register, incident register, exceptions log, access matrix. Days 31-60: 23. Expand integration tests, contract tests, and smoke tests. 24. Add release markers, soak windows, and production health checks. 25. Automate changelog generation, deployment evidence collection, and artifact retention. 26. Build the top ten operational runbooks. 27. Add a standing regression suite from real failures. 28. Review architecture for hotspots, cost drivers, and latency bottlenecks. Days 61-90: 29. Reduce manual steps until the golden path is dominant. 30. Tune alerts to reduce noise and improve detection quality. 31. Review least-privilege access and secret hygiene. 32. Improve model or prompt promotion controls and monitoring. 33. Run a tabletop incident drill and a rollback drill. 34. Produce the first quarterly architecture and governance review packet..

Scene 36 (30m 47s)

Production-Grade AI Application Delivery with ChatGPT Appendix B. Reusable ChatGPT Prompt Patterns Reusable ChatGPT prompt patterns Requirements normalizer “Given the attached product thesis and notes, rewrite the requirements into testable functional, non- functional, AI, and compliance requirements. Assign stable IDs. Flag every ambiguous term and suggest measurable wording. Output as markdown suitable for docs/requirements.” Architecture critic “Given these requirements and the current repository structure, propose three architecture options: lowest cost, lowest operational burden, and highest control. For each option, compare failure domains, deployment complexity, rollback complexity, data movement, secrets exposure, and observability burden. End with a recommended ADR draft.” Pull request reviewer “Review this diff against the following repository rules, threat model, and acceptance criteria. Identify security issues, hidden coupling, missing tests, rollback gaps, migration risks, and documentation gaps. Output a prioritized review checklist.” Test and eval generator “From these requirements, API contracts, and known failures, generate unit tests, integration tests, contract tests, negative-path tests, and AI eval cases. Separate release-gating tests from nightly tests. Output as a structured test plan.” Incident analyst “Given these logs, traces, metrics, release markers, and runbook summaries, propose likely root causes, affected components, immediate containment actions, and follow-up investigations. Distinguish evidence from hypothesis.”.

Scene 37 (31m 44s)

Production-Grade AI Application Delivery with ChatGPT Governance packet drafter “Using the attached release log, risk register, incident register, security findings, access changes, and AI eval deltas, draft a weekly governance packet with: major changes, open risks, exceptions nearing expiry, incidents, customer-visible impacts, and decisions required.” Appendix C. Definition-of-Done Spine Definition-of-done spine for solo production releases A change is not done when the code compiles. It is done when five conditions are all true. One, the requirement or defect being addressed is linked to the change. Two, tests and policy checks pass. Three, the release artifact is identifiable and reproducible. Four, the deployment and rollback paths are explicit. Five, the operational and governance records are updated. For AI-facing changes, add a sixth condition: the model, prompt, retrieval, or policy delta has been evaluated against the standing suite and the change is acceptable by threshold. Use a simple release checklist: • Requirement IDs linked. • Architecture or ADR updated if boundaries changed. • Migration reviewed and tested. • Release manifest generated. • Security findings triaged. • Eval delta reviewed for AI changes. • Runbook updated if operational behavior changed. • Rollback target recorded. • Observability or alert changes included if needed. • Release note generated from the scorecard..

Scene 38 (32m 38s)

Production-Grade AI Application Delivery with ChatGPT This definition of done is intentionally small. It is designed to be executable by one person every week, not admired and ignored. ChatGPT can prefill almost all of the human-readable parts. The delivery platform must enforce the machine-readable parts. Appendix D. Closing Principle Final operating principle A one-person organization can achieve production-grade delivery by making ChatGPT the acceleration layer and making the repository, CI/CD, MLOps, security, and governance stack the enforcement layer. Use the model to think faster, write faster, and review more broadly. Use deterministic systems to decide what is allowed to ship. When those roles are cleanly separated, solo execution becomes less fragile, more scalable, and far easier to audit or improve. References Primary and standards-oriented sources used to anchor the operating model in this handbook. 35. R1. Projects in ChatGPT. OpenAI Help Center. https://help.openai.com/en/articles/10169521- using-projects-in-chatgpt 36. R2. Apps in ChatGPT. OpenAI Help Center. https://help.openai.com/en/articles/11487775- connectors-in-chatgpt 37. R3. Evaluation best practices. OpenAI API docs. https://developers.openai.com/api/docs/guides/evaluation-best-practices/ 38. R4. Safety best practices. OpenAI API docs. https://developers.openai.com/api/docs/guides/safety- best-practices/ 39. R5. Prompt engineering. OpenAI API docs. https://developers.openai.com/api/docs/guides/prompt- engineering/.

Scene 39 (33m 43s)

Production-Grade AI Application Delivery with ChatGPT 40. R6. About rulesets / Available rules for rulesets. GitHub Docs. https://docs.github.com/en/repositories/configuring-branches-and-merges-in-your- repository/managing-rulesets/about-rulesets 41. R7. About code owners. GitHub Docs. https://docs.github.com/articles/about-code-owners 42. R8. Using environments for deployment. GitHub Docs. https://docs.github.com/actions/deployment/targeting-different-environments/using-environments- for-deployment 43. R9. OpenID Connect for GitHub Actions. GitHub Docs. https://docs.github.com/actions/security- for-github-actions/security-hardening-your-deployments/about-security-hardening-with-openid- connect 44. R10. Artifact attestations / Using artifact attestations. GitHub Docs. https://docs.github.com/actions/security-for-github-actions/using-artifact-attestations 45. R11. Code scanning, secret scanning, dependency review, and Dependabot. GitHub Docs. https://docs.github.com/en/code-security/getting-started/github-security-features 46. R12. DVC data versioning and pipelines. DVC Docs. https://doc.dvc.org/start 47. R13. MLflow Tracking. MLflow Docs. https://mlflow.org/docs/latest/ml/tracking/ 48. R14. MLflow Model Registry. MLflow Docs. https://mlflow.org/docs/latest/ml/model-registry/ 49. R15. Evaluating LLMs/Agents with MLflow. MLflow Docs. https://mlflow.org/docs/latest/genai/eval-monitor/ 50. R16. What is OpenTelemetry?. OpenTelemetry Docs. https://opentelemetry.io/docs/what-is- opentelemetry/ 51. R17. Secure Software Development Framework (SP 800-218). NIST. https://csrc.nist.gov/pubs/sp/800/218/final 52. R18. AI Risk Management Framework 1.0. NIST. https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100- 1.pdf.

Scene 40 (34m 48s)

Production-Grade AI Application Delivery with ChatGPT 53. R19. AI RMF: Generative AI Profile. NIST. https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600- 1.pdf 54. R20. SLSA specification and provenance. SLSA / Linux Foundation. https://slsa.dev/spec/v1.0/ 55. R21. OWASP ASVS. OWASP. https://owasp.org/www-project-application-security-verification- standard/ 56. R22. OWASP Top 10 for LLM Applications. OWASP. https://owasp.org/www-project-top-10-for- large-language-model-applications/ 57. R23. Terraform introduction. HashiCorp. https://developer.hashicorp.com/terraform/intro 58. R24. Docker documentation. Docker. https://docs.docker.com/.